Overcoming Decision Paralysis: UX Research for Native Auto-Embeddings

The research focused on a Cloud Platform used by developers to build, scale, and manage modern data applications. As Generative AI became a market imperative, the business sought to capture the growing AI-developer market by becoming an all-in-one AI architecture in which developers could build RAG applications directly within the ecosystem.

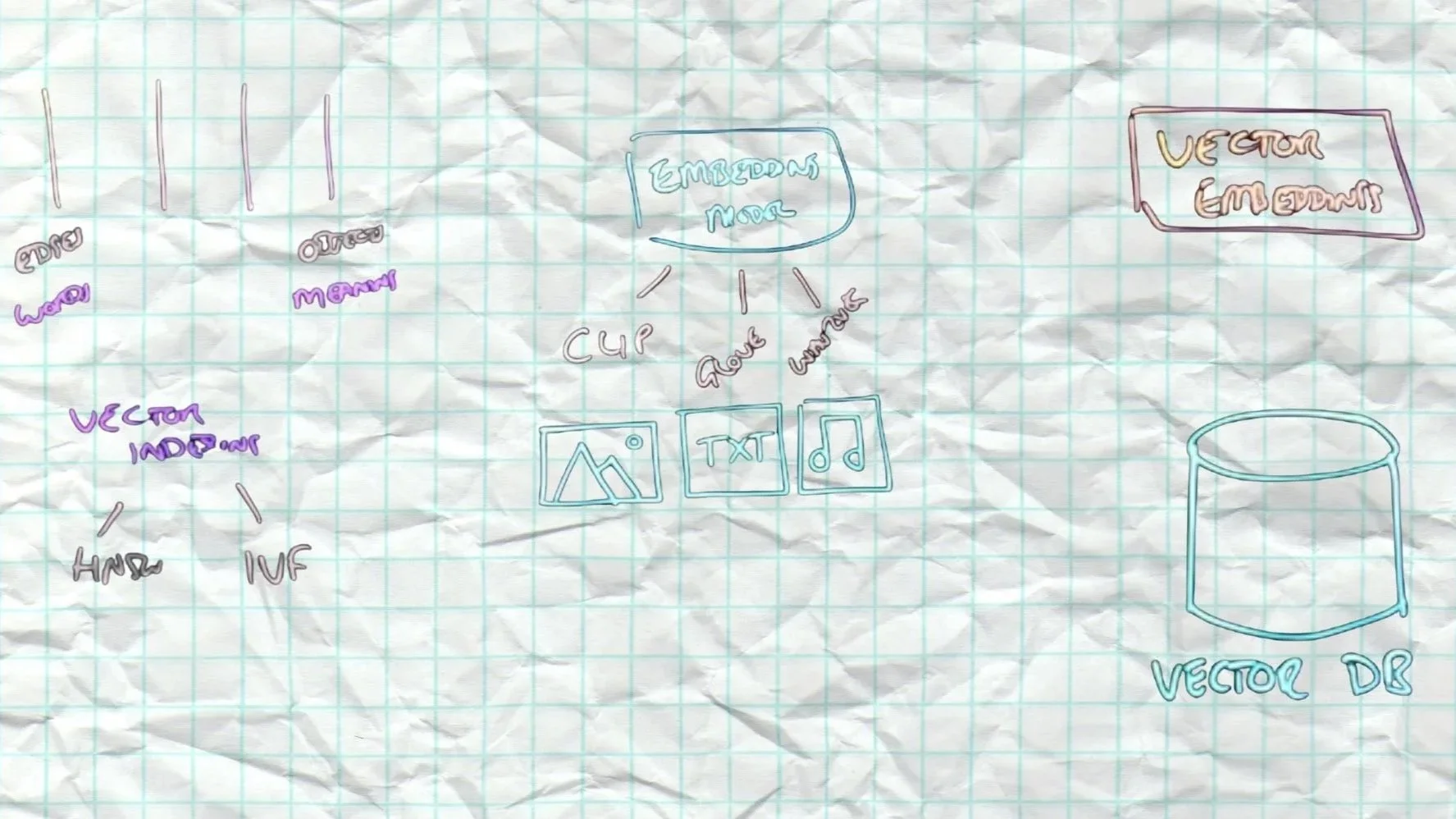

The project was initiated to improve the Cloud Platform's AI Semantic Search onboarding experience, which suffered from high friction because generating vector embeddings required complex, external data pipelines.

I conducted a comprehensive, mixed-methods research study involving exploratory user interviews and a quantitative choice modelling survey to uncover how AI developers evaluate and adopt embedding models. My recommendations are projected to:

Developers faced significant friction building AI-first search capabilities.

Building RAG applications typically forced developers to manage complex, external data pipelines just to generate vector embeddings. At the same time, building the auto-embeddings feature wouldn't guarantee adoption if developers couldn't trust the tool.

"Having the embedding model as part of the solution would eliminate the last element of 'round trip' frustration"

— AI Developer

- De-risk Engineering: Validate whether a native auto-embeddings feature will actually drive adoption before committing expensive development resources.

- Uncover Onboarding Friction: Pinpoint exactly where and why developers currently stall with external tools.

- Define the MVP: Identify the minimum requirements and trade-offs needed for developers to trust a native solution.

- Capture Market Share: Position the platform as a consolidated, all-in-one architecture for AI Semantic Search.

To capture both "what" (scale) and "why" (depth), I designed a two-phase study.

Quantitative Choice Modelling Survey (N=94): Rather than a standard Likert scale, I deployed a forced-choice modelling survey where users had to evaluate competing factors against one another — mathematically quantifying trade-offs and defining the hierarchy of user needs for the MVP.
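To make the idea of quantifying trade-offs concrete, here is a minimal sketch of one simple way forced-choice responses can be turned into a ranking: counting how often each factor wins the pairings it appears in. This is an illustration only, not the analysis used in the study. The response data is invented, and the factor names `accuracy` and `latency` are hypothetical placeholders (only cost is named as a factor in this case study).

```python
from collections import Counter

# Hypothetical forced-choice responses, NOT data from the study.
# Each tuple is (first option shown, second option shown, option chosen).
responses = [
    ("cost", "accuracy", "cost"),
    ("cost", "latency", "cost"),
    ("accuracy", "latency", "accuracy"),
    ("cost", "accuracy", "accuracy"),
    ("latency", "cost", "cost"),
    ("accuracy", "latency", "latency"),
]


def win_rates(responses):
    """Return (factor, share of pairings won) tuples, highest share first."""
    shown = Counter()  # how many pairings each factor appeared in
    won = Counter()    # how many of those pairings it was chosen in
    for first, second, chosen in responses:
        if chosen not in (first, second):
            raise ValueError(f"{chosen!r} was not one of the options shown")
        shown[first] += 1
        shown[second] += 1
        won[chosen] += 1
    rates = {factor: won[factor] / shown[factor] for factor in shown}
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)


ranking = win_rates(responses)
for factor, rate in ranking:
    print(f"{factor}: {rate:.2f}")
```

A count-based score like this is the crudest form of choice analysis. Its appeal over a Likert scale is that respondents cannot rate every factor as "very important", so the output is a forced ordering rather than a flat set of high scores.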

Remote Moderated Interviews (N=6): 60-minute deep dives with technical practitioners, ranging from CTOs and Solution Architects to Junior AI Devs, to uncover current AI workflows, followed by evaluation of the proposed auto-embeddings workflow.

I architected the recruitment strategy around specific stages of the user conversion funnel, from those just exploring AI to those managing production workloads.

Three key findings shifted the product strategy.

Finding 1 — Two Distinct Target User Groups

- Strategic Planner (Jerry): Conducts deep research before deploying, already familiar with different embedding models. Skeptical of "black box" solutions.

- Agile Implementer (Tomas): Lacks deep AI infrastructure confidence but is highly motivated to get started quickly. Experiences immediate decision paralysis with unfamiliar concepts.

→ Recommendation: Tailored onboarding flows for each archetype.

Finding 2 — Knowledge Gaps Drive Behaviour

Many participants found vector embedding concepts difficult to grasp. Because they lacked a methodology to evaluate model quality, developers relied on Cost as the primary decision factor — even when it wasn't the most rational one.

→ Recommendation: Mitigate cost-anxiety by emphasising zero-cost POC onboarding directly in the setup flow.

Finding 3 — Behaviour Shifts Across the Adoption Funnel

Early-stage users embraced the simplicity of auto-embeddings, but developers further down the funnel showed a strong urge to constantly test and swap embedding models.

→ Recommendation: Present clear, relevant performance metrics within the UI to help users evaluate beyond simple model-swapping.

From technical integration to guided educational experience.

By shifting the product approach from a purely "technical integration" to a "guided educational experience" adapted to defined behavioural archetypes:

Challenge your assumptions about "Experts": Initially, we assumed developers building AI apps were deeply knowledgeable about machine learning. The research proved that many are "Agile Implementers" simply trying to stitch APIs together — completely changing how we designed educational scaffolding within the product.

Cost is a proxy for understanding: When users don't understand the technical nuances of a feature, they fall back on what they do understand: money. Designing for transparency around cost is just as important as designing for technical functionality.